Initial Project Data

| Domain Age | 14 years |

| Niche/Market | Education/writing service |

| GEO | EN-speaking countries, main target is the US |

| Target Language | 1 (EN) |

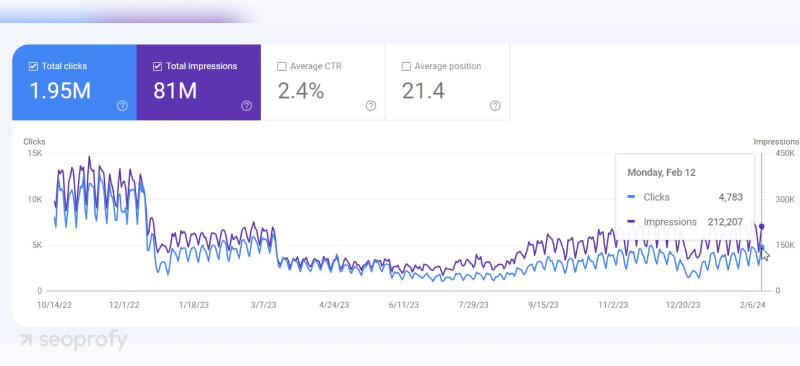

| Daily Organic Traffic (Before/After Google Core Updates) | 10K/2.2K |

| Backlink profile | 13.7K referring domains |

| Pages | 650K |

| Monthly Budget | $12000 |

Challenges

The client reached out to us to determine the reasons for an organic traffic drop after three Google updates:

- December 2022 Link Spam Update

- December 2022 Helpful Content Update

- March 2023 Core Update

The company also wanted to regain its rankings for priority queries.

The client had its own SEO team but needed a second opinion and additional expertise to restore the lost traffic.

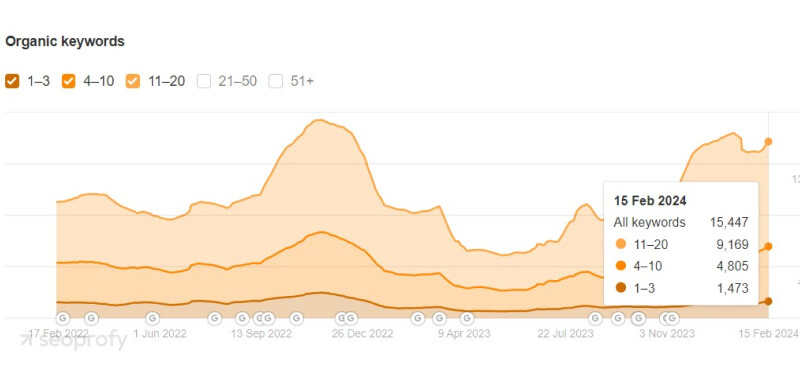

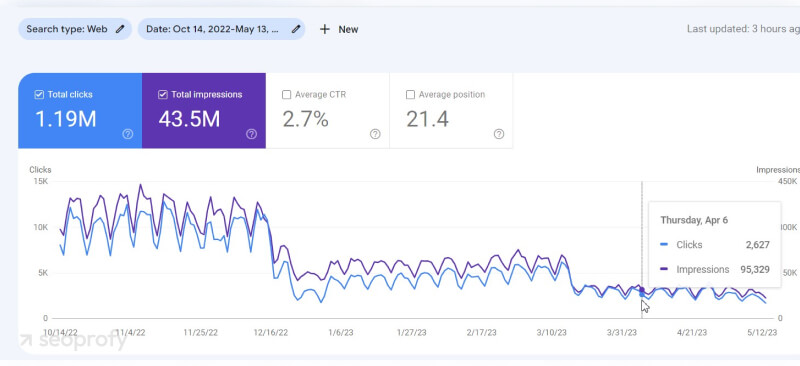

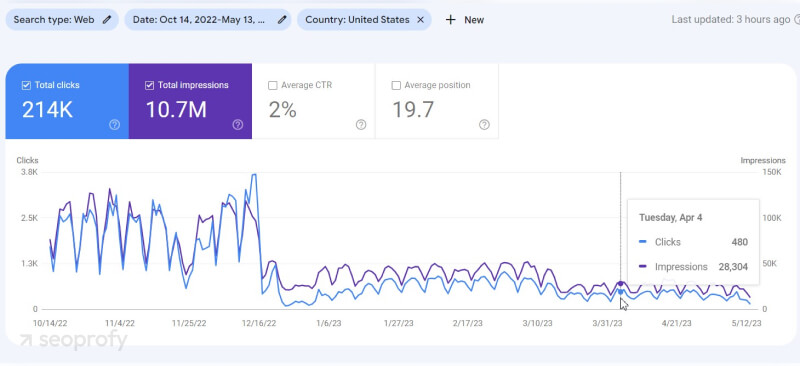

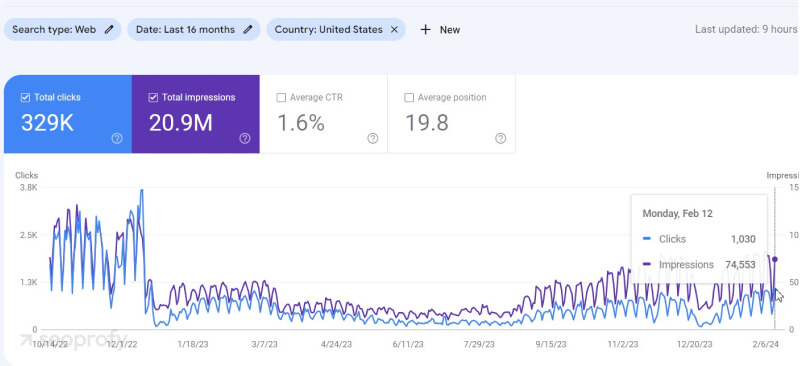

Before the first drop, the website had 8-11K organic visitors a day (2-3K from the US). After the second drop in March 2023, the website had 2-2.5K organic visitors a day (400-500 visitors a day from the US). Our cooperation started in July 2023.

Google Search Console (all countries):

Google Search Console (USA):

It’s also worth noting that the traffic in this niche is seasonal. The highest activity levels are observed at the end of each academic semester (November-December, April-May).

To address these challenges, we divided our working process into two main stages:

- Analyzing the current situation, determining the problems, and creating an action plan

- Implementing the optimization strategy.

Main Issues

Technical

- 650K pages, of which only ~30K were indexed

- A large number of 404 pages (almost 17% of all website pages)

- Chaotic internal linking

- A lack of or duplicated titles/meta descriptions/H1 tags

- Issues with mobile optimization

Content

- Duplicated pages and several pages created for one search intent

- Keyword over- and under-optimization: some keywords were not used at all, and some were overused

- Informational content was used on landing pages

- Weak content structure

Link Building

- Many low-quality links

- No coherent link building strategy

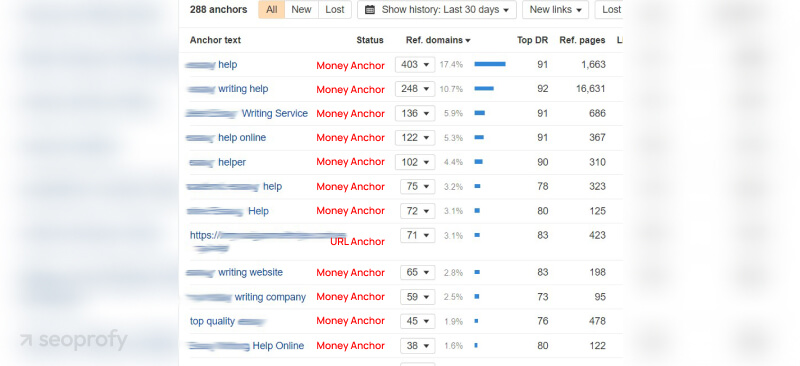

- Many links to landing pages with similar money anchor texts, and for some pages, 70% of backlinks had the same commercial anchor text type

Project Strategy Creation

We started by performing thorough audits to determine the current state of the website and clearly define all the possible issues. This allowed us to prioritize the problems according to their importance and create a comprehensive project strategy.

Technical SEO Audit

First, we performed a detailed technical audit of the website. These were the main issues we found:

- Duplicated H1 tags and meta tags (titles, descriptions)

- Many internal links pointed to pages with 4xx and 3xx response codes

- The website had a separate US subdirectory, /us/, while the main domain wasn’t targeted to any specific country, using hreflang=x-default

- Orphan pages (with internal links only from the sitemap)

- Issues with the mobile version

- Crawlability issues.

After the technical audit, we created a task list, sorted the tasks according to their priority level, and set the executor for each task.
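Two of the checks listed above (internal links to 3xx/4xx pages and orphan pages) can be sketched in a few lines. This is a minimal illustration, not the tooling used in the project: it assumes the crawl data has already been exported, and the function name `audit_links` and its input shapes are hypothetical.

```python
from collections import defaultdict


def audit_links(status_by_url, links, sitemap_urls):
    """Return (bad_links, orphans) from pre-collected crawl data.

    status_by_url: dict of URL -> HTTP status code seen by the crawler
    links: iterable of (source_url, target_url) internal links
    sitemap_urls: URLs listed in the XML sitemap
    """
    inbound = defaultdict(set)
    bad_links = []
    for source, target in links:
        inbound[target].add(source)
        status = status_by_url.get(target)
        # Flag internal links whose target answers with a 3xx or 4xx code
        if status is not None and 300 <= status < 500:
            bad_links.append((source, target, status))
    # Orphans: listed in the sitemap but never linked from another page
    orphans = sorted(url for url in sitemap_urls if not inbound.get(url))
    return bad_links, orphans
```

The output of such a check maps directly onto a task list: each flagged link is either updated to point at the final URL or removed, and each orphan page is either linked internally or dropped from the sitemap.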

Content Audit

During the content audit, we analyzed the general state of the content on the website and took a closer look at the content on high-priority pages. As a result, we found the following problems:

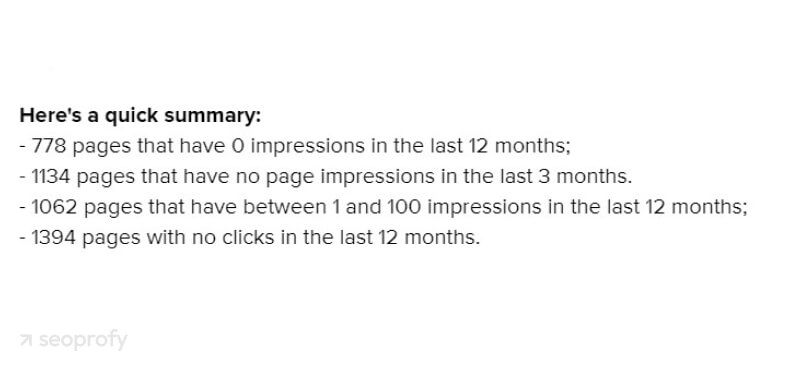

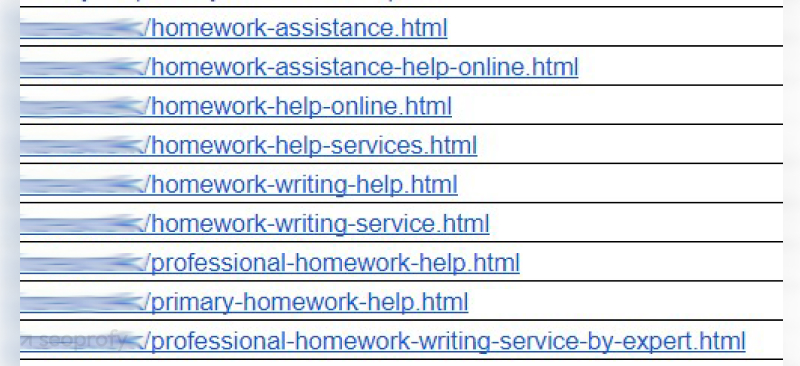

- There were numerous zombie pages:

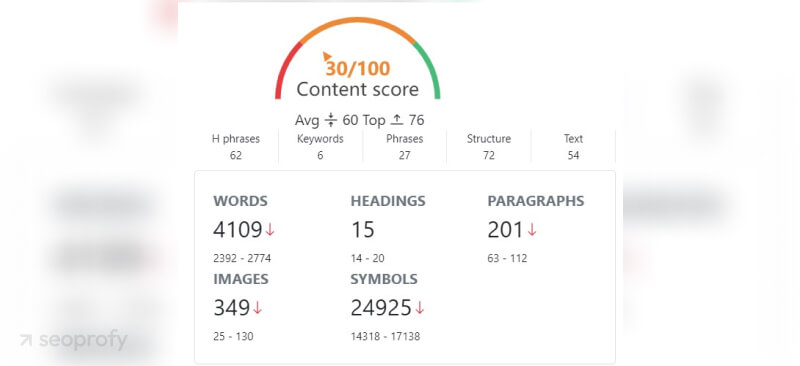

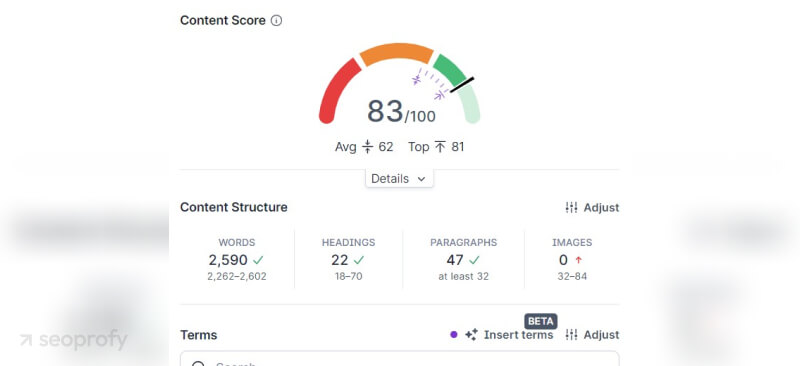

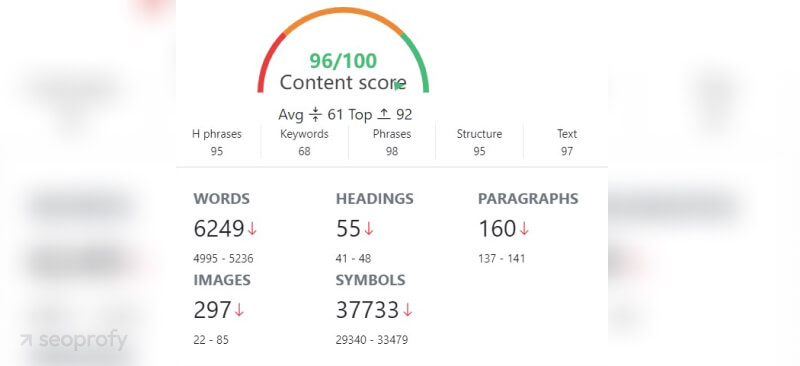

- Content had poor SEO scores. Most of the high-priority pages had a low content quality score according to our internal tool, SearchAnalytics.ai:

- Informational content was used on commercial pages that had product structured data, which is not relevant to informational content.

- The content was not well-structured. The pages looked like walls of text. They lacked many necessary page elements that were present on competitors’ pages (for example, advantages, prices, instructions on how to place an order, etc.).

- Numerous duplicated pages created for the same search intent.

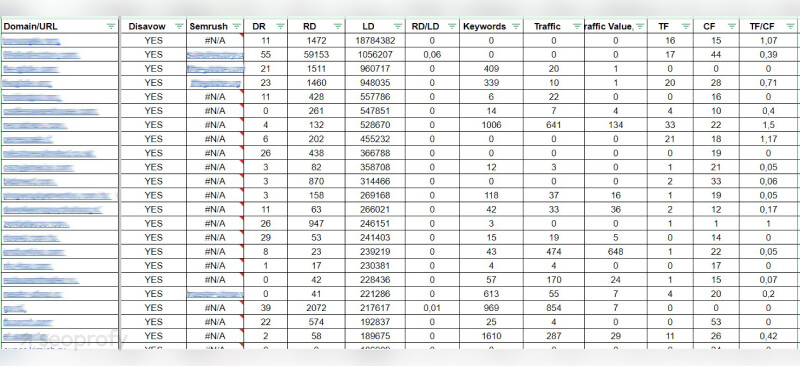

Backlink Audit

During the backlink audit, we analyzed the client’s backlink profile and compared it with that of its competitors. Here is what we found:

- An aggressive link building strategy: For the majority of the website's commercial pages, more than 80% of the backlinks featured money anchor text:

- Many low-quality links: Only 300 domains out of 13K were high-quality. Most links were placed on Web 2.0 sites, forums, etc.
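The anchor text finding above comes down to a per-page share calculation. The sketch below assumes each backlink has already been labeled with an anchor type (for example "money" or "brand"); the labels, the function names, and the 80% threshold used as a default are illustrative assumptions, not the exact method used in the audit.

```python
from collections import Counter


def anchor_type_share(backlinks):
    """backlinks: iterable of (target_page, anchor_type) pairs.

    Returns {page: {anchor_type: share of that page's backlinks}}.
    """
    counts = {}
    for page, anchor_type in backlinks:
        counts.setdefault(page, Counter())[anchor_type] += 1
    return {
        page: {t: n / sum(c.values()) for t, n in c.items()}
        for page, c in counts.items()
    }


def over_optimized(backlinks, anchor_type="money", threshold=0.8):
    """Pages where one anchor type exceeds the given share of backlinks."""
    shares = anchor_type_share(backlinks)
    return sorted(
        page for page, s in shares.items() if s.get(anchor_type, 0.0) > threshold
    )
```

Running the same calculation on competitors' pages gives a reference distribution to compare against.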

Action Plan

After the audits and competitor analysis, we created an SEO strategy and a clear action plan in which we prioritized the most critical issues on the website. The main tasks were:

- Solve the main technical problems on the website to improve its indexation.

- Decide on the separate /us/ subdirectory and give recommendations on the hreflang annotation settings.

- Perform keyword research to expand and optimize the site structure.

- Remove duplicated pages and pages with low-quality content.

- Prepare recommendations for content creation.

- Disavow low-quality backlinks and create a new link building strategy.

Implementation

Technical Issues

To fix the technical issues, we prepared a brief for the developers for each task, explaining how to solve the problem. Here’s what we achieved:

- We removed internal links to the pages with 3xx and 4xx response codes.

- We created meta tag templates for the site’s typical pages.

- We optimized the headings for high-priority pages.

- We optimized the structure of subheadings on the pages and removed excessive headings.

- We resolved mobile version issues (some elements were blocked in the robots.txt file).

- We provided recommendations regarding the automatic generation of the sitemap and the rules for updating it and adding pages.

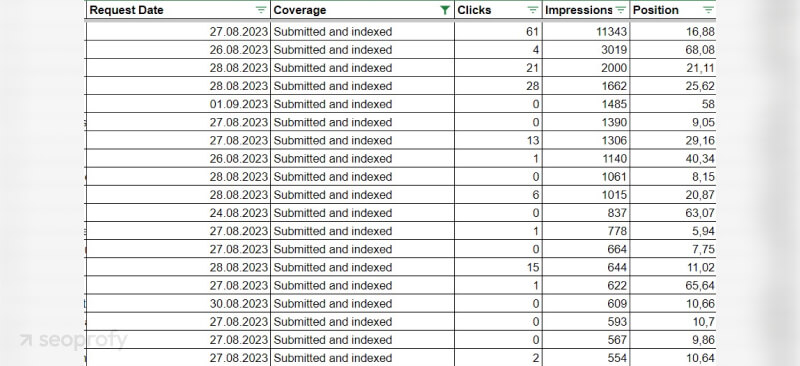

- To solve the indexation issues, we suggested configuring the Google Indexing API for daily page indexation (by submitting a request to Google, we increased the daily quota from 200 to 600 pages). Some pages even started getting clicks and impressions:
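The quota-limited daily submission mentioned in the last item can be sketched as follows. The example only prepares the work: it splits a URL list into daily batches and builds the JSON body for a `URL_UPDATED` notification. Authentication and the actual HTTP calls are left out, and the helper names and the 600-per-day default are assumptions taken from the numbers above.

```python
import json

# Publish endpoint of the Google Indexing API (v3)
INDEXING_ENDPOINT = "https://indexing.googleapis.com/v3/urlNotifications:publish"


def daily_batches(urls, daily_quota=600):
    """Split URLs into consecutive batches of at most daily_quota items."""
    return [urls[i:i + daily_quota] for i in range(0, len(urls), daily_quota)]


def publish_body(url):
    """JSON request body announcing that a URL was added or updated."""
    return json.dumps({"url": url, "type": "URL_UPDATED"})
```

Each day, one batch would be sent to the endpoint by an authorized client, so a backlog of 1,500 URLs would take three days at a quota of 600.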

Locale-Specific Subdirectories

The website had over 30 language versions implemented through subdirectories. The main domain had the hreflang=x-default attribute, which led to a majority of the website pages cannibalizing each other, as both pages within the /us/ subdirectory and ones from the main domain were ranking in the US.

We decided to:

- Target the main version of the website to the US. The /us/ subdirectory was redirected to the main domain.

- Recommend removing the locale-specific versions of the site that didn’t have any traffic.
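The redirect of the /us/ subdirectory amounts to a simple path mapping. Below is a minimal sketch, assuming each /us/ URL has a same-path equivalent on the main domain; the function name is hypothetical and the real rule would live in the web server or CMS configuration.

```python
def us_redirect_target(path):
    """Return the main-domain path for a /us/ URL, or None if no redirect applies."""
    if path == "/us" or path.startswith("/us/"):
        # Strip the locale prefix; the bare /us/ root maps to the home page
        return path[len("/us"):] or "/"
    return None
```

For example, `/us/essay-writing` maps to `/essay-writing`, while a path such as `/usa-guide` is left untouched because it only shares a prefix with the subdirectory name.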

Keyword Research and Website Structure Optimization

This stage was the most time-consuming, as we needed to research and analyze keywords for more than 200 different clusters. The semantics we gathered allowed us to understand the following:

- Which pages were not present on the client’s website and needed to be added

- Which pages needed to be merged (because they had the same intent)

- How to prioritize clusters to create an improved site structure and plan further optimization.
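The first two questions above can be answered mechanically once every existing page has been mapped to a keyword cluster. The sketch below assumes that mapping already exists; the function name and data shapes are illustrative and not the actual research workflow.

```python
from collections import defaultdict


def plan_structure(page_clusters, all_clusters):
    """Find merge candidates and missing pages.

    page_clusters: dict of page URL -> keyword cluster (search intent) it targets
    all_clusters: every cluster identified during keyword research
    """
    pages_by_cluster = defaultdict(list)
    for page, cluster in page_clusters.items():
        pages_by_cluster[cluster].append(page)
    # Several pages for one intent: candidates for merging
    to_merge = {c: sorted(p) for c, p in pages_by_cluster.items() if len(p) > 1}
    # Clusters with no page at all: pages to create
    to_create = sorted(c for c in all_clusters if c not in pages_by_cluster)
    return to_merge, to_create
```

Prioritizing the clusters still requires judgment (search volume, commercial value, competition), so it is left out of the sketch.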

Based on the keyword research, we changed the primary navigation menu and the footer menu because the navigation bar was overloaded: it contained almost every page on the website.

Here’s what we did:

- Divided the primary navigation menu according to the types of services

- Added first-priority landing pages to the primary menu

- Removed irrelevant pages from the primary navigation menu that could distract the user from converting

- Expanded and improved the structure of the footer

- Added second-priority pages to the footer. This way, we avoided duplicating pages in the primary menu and the footer.

Internal Linking

To improve the internal linking on the website, we suggested adding an internal linking section to the second-level pages. A separate list of URLs was created for each category of services and added to the “Other Services” section. This helped us improve the indexation of the second- and third-level pages and increase the internal link equity of priority pages.

Link Building

First, we analyzed all the backlinks of the website. In total, about 20K referring domains linked to it, according to combined data from Ahrefs, Semrush, and Google Search Console.

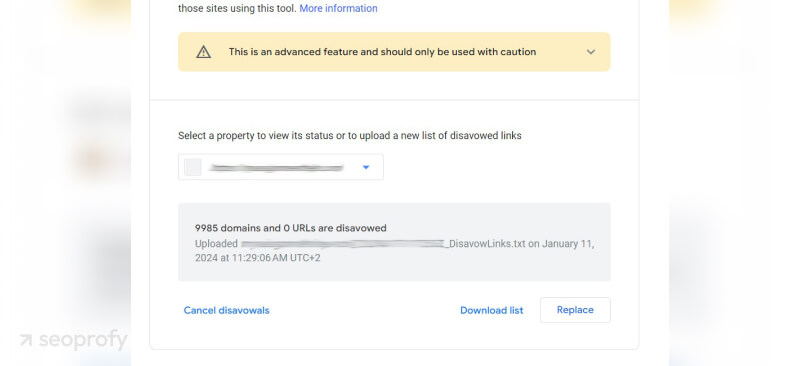

Using information from several sources, we analyzed the backlinks and created a list of links to disavow with the Google Disavow Tool:

[blockquote cite="Andrew Shum, Head of SEO"]

Disavowing many low-quality links at once can negatively affect organic traffic dynamics. Even “bad” backlinks can have a small positive impact on link building for a website because low scores in SEO tools do not necessarily mean that search engines consider these links bad. In general, it’s better to first build quality links and then start disavowing low-quality ones.

[/blockquote]

Thus, we submitted them to the Google Disavow Tool gradually. Every 3–4 weeks, we disavowed 1.5-2K low-quality links. In total, we disavowed almost 10K domains:
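The staged submission can be illustrated with a short sketch that builds the disavow file for each upload. Because a newly uploaded disavow file replaces the previous one, every upload here is cumulative: it contains all earlier domains plus the next batch. The function name and the batch size of 2,000 are assumptions based on the figures above.

```python
def disavow_uploads(domains, batch_size=2000):
    """Return the full disavow file text for each successive upload.

    domains: low-quality referring domains, ordered by disavow priority
    """
    uploads = []
    for end in range(batch_size, len(domains) + batch_size, batch_size):
        lines = ["# Low-quality referring domains, disavowed in stages"]
        # "domain:" entries disavow every link from that domain
        lines += [f"domain:{d}" for d in domains[:end]]
        uploads.append("\n".join(lines) + "\n")
    return uploads
```

With 5,000 domains and the default batch size, this yields three files containing 2,000, 4,000, and 5,000 entries, to be uploaded a few weeks apart.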

We also created a link building strategy that was focused on quality and not quantity:

- We provided the client’s link building team with clear requirements and criteria for choosing domains.

- We changed their approach to link building: abandoned Web 2.0 websites completely, minimized forum and profile backlinks, suggested placing backlinks on home pages, and recommended focusing on quality outreach and link insertion strategies.

- We created an anchor text strategy for all high-priority pages.

As a result, the number of high-quality backlinks started growing, which also correlated with organic traffic growth. On this screenshot, you can see the backlink growth dynamics according to Ahrefs ('Best Links'):

Content Optimization

First of all, we needed to remove numerous duplicated and low-quality pages from the website. Here’s what we did:

- We deleted the “News” section completely, as it didn’t have any traffic or views.

- We removed duplicates of the “Tools” pages from locale-specific versions of the site. Our analysis showed that such queries are not locale-specific.

- We analyzed pages that had no organic traffic in more than 12 months and removed most of them. The majority of these articles were written back in 2015. Pages that could potentially get traffic were added to the content optimization plan.

- We moved the informational content from commercial landing pages to the “Blog” section.
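The triage of pages without traffic can be sketched as a simple filter. The source does not state how "pages that could potentially get traffic" were identified, so the impressions threshold below is purely a hypothetical stand-in, as are the field names and the function name.

```python
def triage_pages(pages, min_impressions=100):
    """Split zero-traffic pages into removal and optimization candidates.

    pages: iterable of dicts with 'url', 'clicks_12m', and 'impressions_12m'
    min_impressions: hypothetical cutoff for "could potentially get traffic"
    """
    remove, optimize = [], []
    for page in pages:
        if page["clicks_12m"] > 0:
            continue  # the page still gets organic traffic, keep it as is
        if page["impressions_12m"] >= min_impressions:
            optimize.append(page["url"])
        else:
            remove.append(page["url"])
    return remove, optimize
```

In practice, the removal list would still be reviewed by hand before anything is deleted or redirected.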

For every landing page, we created clear instructions with the help of Surfer SEO and SearchAnalytics.ai. As a result, we significantly improved the content quality from a technical point of view. The main goal was not just to get high scores but to improve the quality of the content and the use of keywords:

Results

With this strategy, we restored more than 50% of the website’s organic traffic. We solved critical issues that influenced the performance of the website and created a clear action plan that will help the client move in the right direction.

The website showed considerable organic traffic growth:

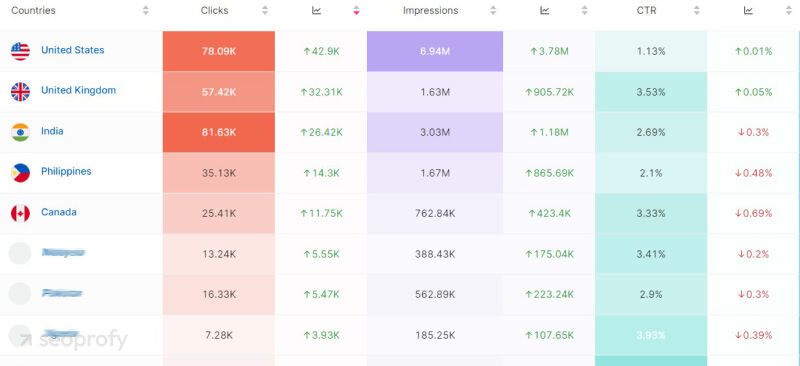

- All countries: from 2.2K visitors a day (in season) to 4.7K (off-season)

- US: from 480 visitors a day (in season) to 1K (off-season)

The number of clicks and other metrics practically doubled in the US and other countries:

The website’s organic keyword performance was also restored to the level it showed before the first traffic drop: