September 2022: Starting Point

| Metric | Value |
|---|---|
| Domain Age | 20 years |
| Niche/Market | Trading |
| GEO | Worldwide |
| Target Languages | 35+ |
| Daily Organic Traffic | 1.2K |
| Backlink Profile | 1.4K referring domains |
| Pages | 120K |
| Monthly Budget | $18,000 |

Challenge

Our client's goal was ambitious: to grow organic traffic fivefold within two years. The client also expected First Time Deposits (FTDs) to rise in proportion to organic traffic.

The client contacted us after the site had been demoted by two Google algorithm updates:

- the August 2022 Helpful Content Update

- the September 2022 Core Update, which caused a severe traffic drop

We took on the project immediately afterward.

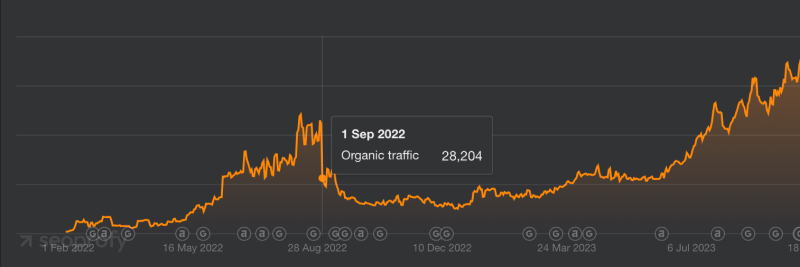

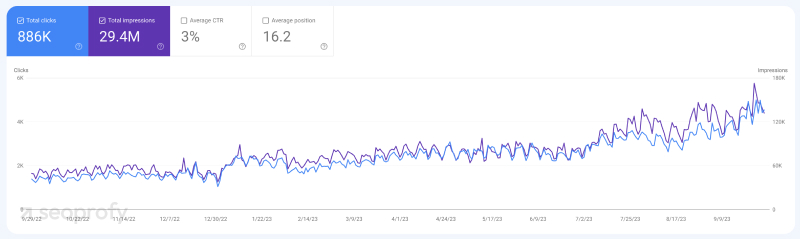

Ahrefs:

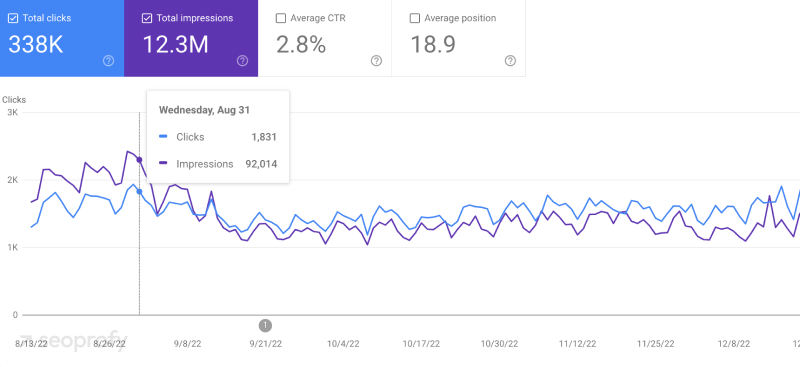

Google Search Console:

Main Issues

At the project launch stage, our preliminary audit pinpointed the following challenges:

| Area | Issue |
|---|---|
| Technical | |
| Content | Content was written without search engine optimization |
| Link Building | Backlinks were built only to the EN site version |

Team Setup

Based on the available data and the preliminary audit of the website, we assembled the following team:

- 1 SEO team lead

- 2 Link builders

- 1 Project manager

All team members already had experience in the trading niche when they joined the project.

Project Strategy

The first and most essential steps were a niche analysis and an in-depth SEO audit, which we carried out as soon as the team was in place.

Niche Analysis

We analyzed the niche in the priority GEOs and, based on this analysis, selected the most relevant competitors. A deeper analysis of these competitors then revealed the site's main weaknesses:

- The site structure: certain categories with high organic traffic potential were missing, even though several competitors were strong in them.

- Internal linking was significantly weaker, in both logic and implementation quality, than on the top sites in the niche.

- The link building strategy was ineffective. Backlinks were built only to the EN version of the site, using a single type of outreach and predominantly money anchors.

Technical SEO Audit

Together with the niche analysis, we conducted a technical SEO audit of the website. The audit revealed quite a few issues:

- Duplicate language versions (EN-CA, EN-US, EN-AU, etc.).

- Orphan pages, which were linked only from the sitemap.

- Site loading issues that affected Googlebot crawling and, as a result, page indexing.

- Footers and headers were practically unused for navigation, and priority pages were missing from the menu.

- Due to poor internal linking, many internal links pointed to 301 redirects or returned a 404 status code.

Action Plan

Using all the data from the niche analysis and the technical audit, we formed an SEO strategy. Since the scope of work was large, prioritizing the tasks correctly was critical. To see positive changes in rankings and organic traffic as quickly as possible, we drew up the following action plan:

- Eliminate duplicate language versions.

- Create an optimal structure for the website based on the niche analysis.

- Fix and improve internal linking throughout the site.

- Optimize page loading speed and overall server performance.

- Increase website indexation to 95%+ of pages.

- Analyze existing content and prepare recommendations for further work with existing and new content.

- Add missing E-E-A-T elements (Experience, Expertise, Authoritativeness, and Trustworthiness, which are part of Google’s Search Quality Rater Guidelines).

- Start building links according to the updated link building strategy.

Execution

Duplicate content across language variants

To resolve the duplicate content, we took the following approach: if a page was indexed and had traffic or high-quality external links, we set up a 301 redirect to the relevant page of the base language version.

Example:

site.com/en-us/page: a 301 redirect to site.com/page (EN is the base language of the site)

site.com/pt-br/page: a 301 redirect to site.com/pt/page

In all other cases, we set the page to return a 410 (Gone) status code.
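The redirect-or-remove rule above can be sketched as a small decision function. This is a minimal illustration, not our production tooling: the `VARIANT_TO_BASE` prefix map, the `PageStats` fields, and the reading of the rule as "indexed, plus either traffic or quality backlinks" are all assumptions.

```python
from dataclasses import dataclass
from typing import Optional
from urllib.parse import urlsplit

# Hypothetical mapping of regional path prefixes to base-language prefixes.
# An empty string means the root, since EN is the site's base language.
VARIANT_TO_BASE = {
    "en-us": "", "en-ca": "", "en-au": "",
    "pt-br": "pt", "pt-pt": "pt",
}


@dataclass
class PageStats:
    indexed: bool
    organic_clicks: int
    quality_backlinks: int


def base_url(url: str) -> str:
    """Map a regional duplicate URL to its base-language equivalent.

    Query strings and trailing slashes are dropped in this sketch.
    """
    parts = urlsplit(url)
    segments = parts.path.strip("/").split("/")
    prefix = segments[0].lower()
    if prefix not in VARIANT_TO_BASE:
        return url  # not a regional duplicate, leave unchanged
    base = VARIANT_TO_BASE[prefix]
    new_segments = ([base] if base else []) + segments[1:]
    return f"{parts.scheme}://{parts.netloc}/" + "/".join(new_segments)


def resolve_duplicate(url: str, stats: PageStats) -> tuple[int, Optional[str]]:
    """Return (status_code, redirect_target) for a duplicate language page."""
    # Assumed reading: the page must be indexed AND have traffic or quality links.
    worth_redirecting = stats.indexed and (
        stats.organic_clicks > 0 or stats.quality_backlinks > 0
    )
    if worth_redirecting:
        return 301, base_url(url)
    return 410, None
```

For example, `resolve_duplicate("https://site.com/pt-br/page", PageStats(True, 0, 3))` yields a 301 to `https://site.com/pt/page`, matching the example mapping above.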

Within a few weeks, we saw improvement. The screenshot below shows the traffic growth of the site's Portuguese version, which until then had been split across three duplicate versions for three different countries.

Seocrawl:

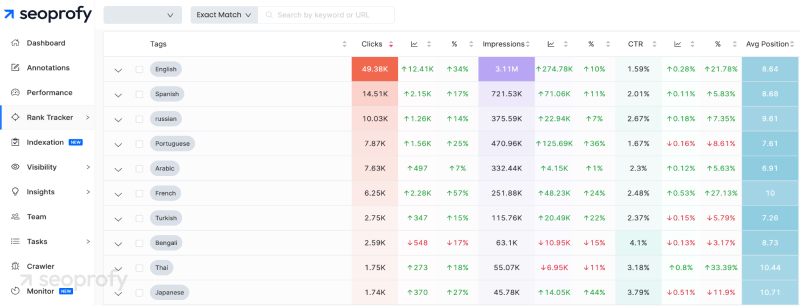

Our next step was to better understand the effectiveness of each language version. We also needed to check the search volume of relevant queries in each language for the priority pages.

To do this, we created a tag for each language version in Seocrawl. This let us analyze Google Search Console data per language version quickly and efficiently.
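Seocrawl handles this tagging in its own interface; the idea behind it can be sketched on exported Search Console rows. The sketch assumes each row is a `(page_path, clicks, impressions)` tuple and that language versions live under a path prefix, with the root serving EN. The `LANG_PREFIXES` set is hypothetical.

```python
from collections import defaultdict

# Hypothetical language-version path prefixes; anything else is treated as EN (root).
LANG_PREFIXES = {"pt", "es", "de", "fr", "it"}


def language_tag(url_path: str) -> str:
    """Tag a URL path with its language version based on the first path segment."""
    first = url_path.strip("/").split("/")[0].lower()
    return first if first in LANG_PREFIXES else "en"


def clicks_by_language(rows):
    """Aggregate clicks and impressions per language tag.

    rows: iterable of (page_path, clicks, impressions) tuples exported from GSC.
    """
    totals = defaultdict(lambda: {"clicks": 0, "impressions": 0})
    for path, clicks, impressions in rows:
        tag = language_tag(path)
        totals[tag]["clicks"] += clicks
        totals[tag]["impressions"] += impressions
    return dict(totals)
```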

Here's the dashboard we used:

Structure and internal linking

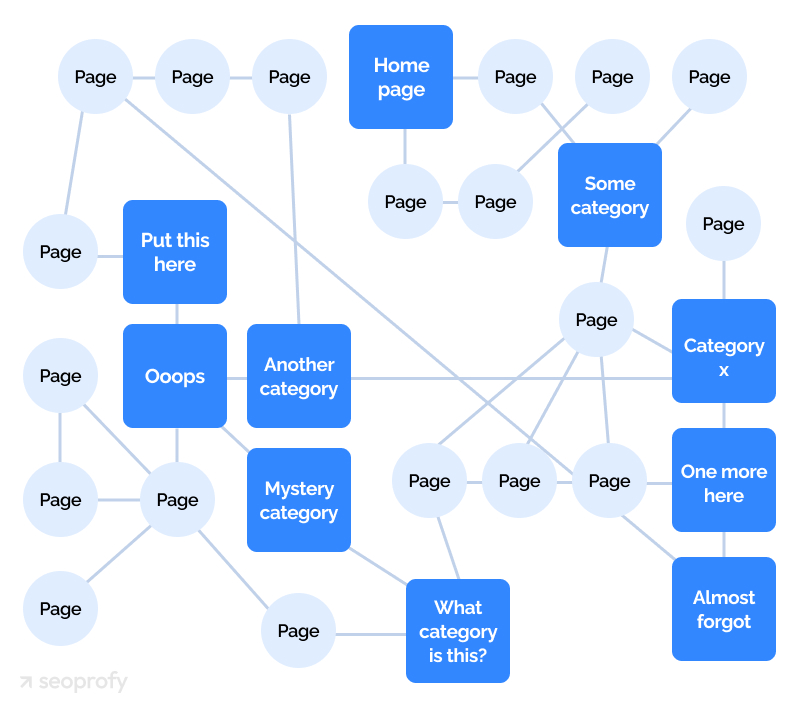

Next, we had to fix the existing structure of the site, which originally looked like this:

We reorganized all content into a silo structure with shallow nesting. The categories were based on keyword clustering and on the structures of competitors ranking at the top of the SERPs.
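The case study does not specify the clustering method. One common approach is SERP-overlap clustering, in which two keywords belong together if their top-10 results share enough URLs. The sketch below assumes that method, a `min_shared` threshold of 3, and a `serps` dict of keyword to top-ranking URLs. It uses union-find, so clusters are transitive (single-linkage), which is looser than some "hard" clustering tools.

```python
from itertools import combinations


def cluster_keywords(serps: dict[str, set[str]], min_shared: int = 3) -> list[set[str]]:
    """Group keywords whose top results share at least `min_shared` URLs."""
    parent = {kw: kw for kw in serps}

    def find(kw: str) -> str:
        # Walk to the root, compressing the path as we go.
        while parent[kw] != kw:
            parent[kw] = parent[parent[kw]]
            kw = parent[kw]
        return kw

    for a, b in combinations(serps, 2):
        if len(serps[a] & serps[b]) >= min_shared:
            parent[find(a)] = find(b)  # merge the two clusters

    groups: dict[str, set[str]] = {}
    for kw in serps:
        groups.setdefault(find(kw), set()).add(kw)
    return list(groups.values())
```

Each resulting cluster is a candidate page or category in the silo. Competitor structures then help decide where each cluster sits in the hierarchy.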

At the same time, we solved the issue of internal linking of the site.

We built an extensive, logical, and user-friendly menu.

Priority categories and pages were also added to additional menus in the footer.

We developed and placed blocks with links to priority pages throughout the site.

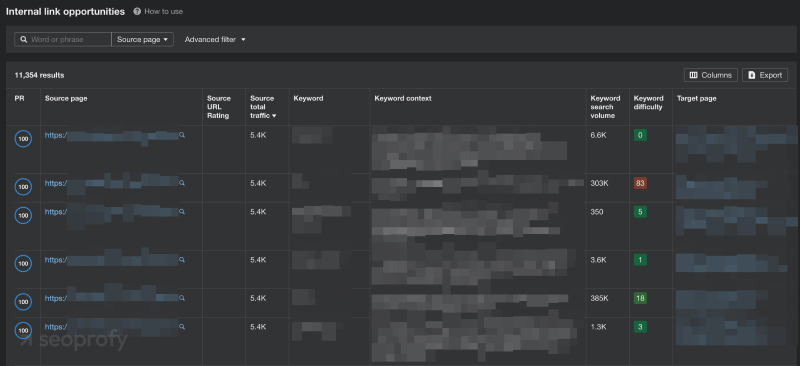

To improve the site's internal linking, we relied on several tools, including:

Ahrefs Internal Link Opportunities:

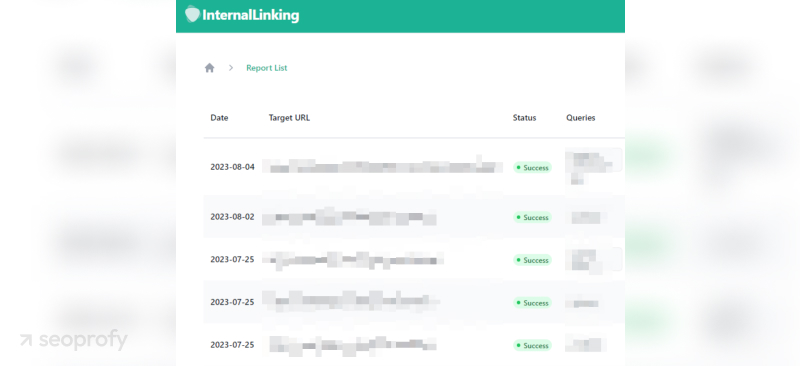

InternalLinking (SEOTesting.com):

We also created instructions for adding internal links to the content.

As a result, we eliminated all orphan pages and ensured that every page received sufficient internal links.

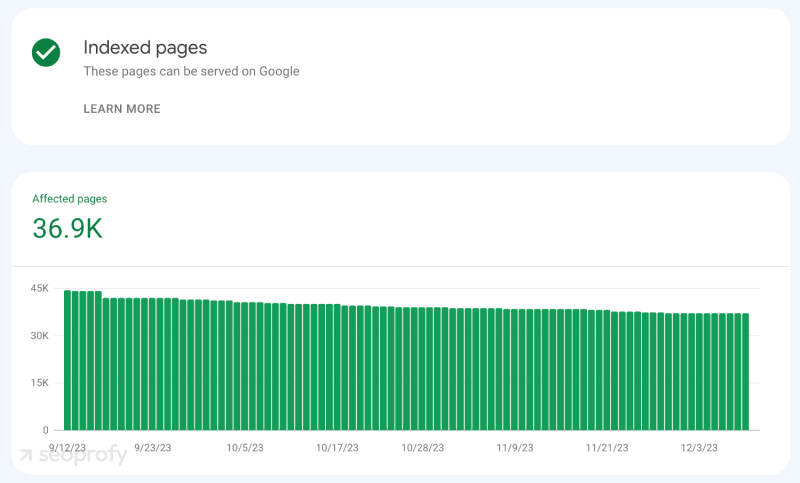
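Orphan and under-linked pages can be identified by comparing sitemap URLs against the internal link graph from a crawl. This is a minimal sketch that assumes the crawl is exported as `(source_url, target_url)` pairs. The `min_inlinks` threshold is a hypothetical value, not the one used on this project.

```python
from collections import Counter


def inlink_counts(internal_links):
    """Count unique internal inlinks per target URL, ignoring self-links."""
    return Counter(target for source, target in set(internal_links) if source != target)


def find_orphans(sitemap_urls, internal_links):
    """Sitemap URLs that no other crawled page links to."""
    counts = inlink_counts(internal_links)
    return sorted(url for url in set(sitemap_urls) if counts[url] == 0)


def under_linked(sitemap_urls, internal_links, min_inlinks=3):
    """Sitemap URLs with fewer than `min_inlinks` unique internal inlinks."""
    counts = inlink_counts(internal_links)
    return sorted(url for url in set(sitemap_urls) if counts[url] < min_inlinks)
```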

Indexation

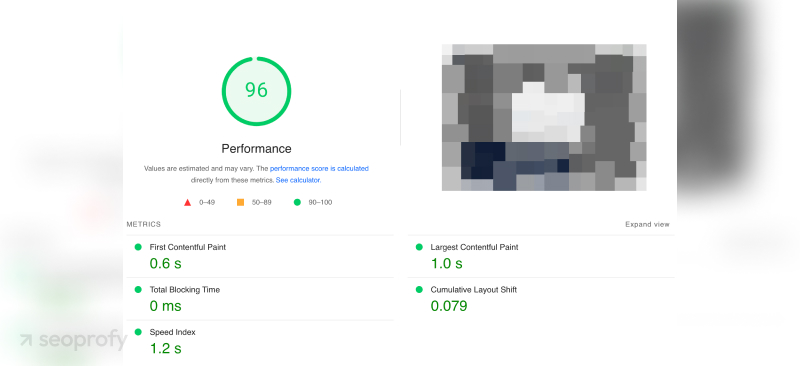

We had already partially improved indexing by optimizing internal linking. The next steps were:

- optimizing the loading speed of website pages

- optimizing server performance

- switching to newer image formats such as WebP

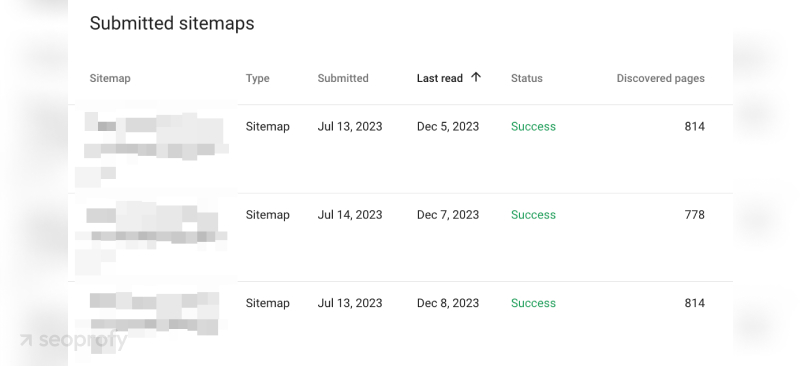

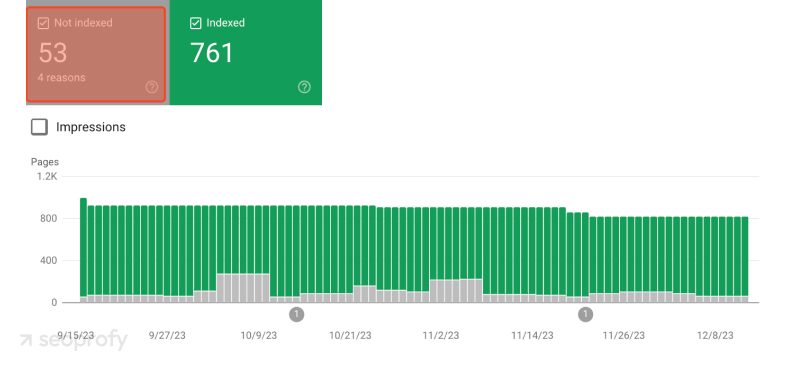

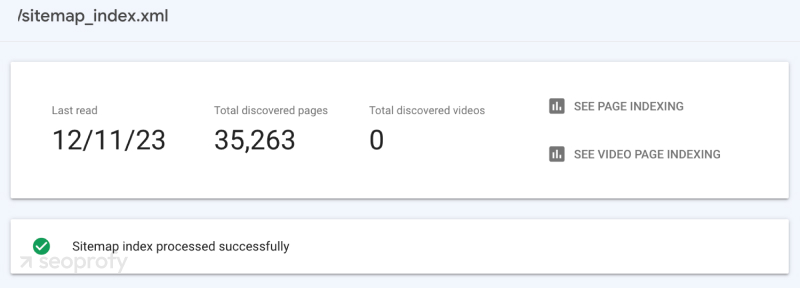

We split the sitemaps so that none of them contained more than 1,000 URLs.

This gave us full control over indexing and let us export all non-indexed pages, one sitemap at a time.
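Splitting can be sketched with the standard library alone. The 1,000-URL chunk size comes from the text, and it is far below the sitemap protocol's 50,000-URL limit. Search Console can filter its page indexing report by submitted sitemap, so smaller files make non-indexed pages easier to isolate. The `https://site.com/sitemap-N.xml` naming is a hypothetical convention.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def chunk(urls, size=1000):
    """Split a list of URLs into consecutive chunks of at most `size`."""
    return [urls[i:i + size] for i in range(0, len(urls), size)]


def build_sitemap(urls):
    """Render one <urlset> sitemap as an XML string."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for u in urls:
        ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = u
    return ET.tostring(urlset, encoding="unicode")


def build_index(sitemap_locs):
    """Render a <sitemapindex> that points to each child sitemap."""
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for loc in sitemap_locs:
        ET.SubElement(ET.SubElement(index, "sitemap"), "loc").text = loc
    return ET.tostring(index, encoding="unicode")


def split_sitemaps(urls, base="https://site.com", size=1000):
    """Return ({sitemap_url: xml}, index_xml) with at most `size` URLs per file."""
    files = {}
    for n, part in enumerate(chunk(urls, size), start=1):
        files[f"{base}/sitemap-{n}.xml"] = build_sitemap(part)
    return files, build_index(list(files))
```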

We then checked all of these pages through the Google Indexing API to confirm whether they were actually indexed. Every page not listed as "submitted" or "indexed" was sent for indexing via the same API, within the daily quota.
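Staying within the daily quota can be sketched as a simple batching step. The request body `{"url": ..., "type": "URL_UPDATED"}` and the `urlNotifications:publish` endpoint are part of the Indexing API. The 200-per-day default quota, the `INDEXED_STATES` labels, and the `coverage` dict of URL to status are assumptions. Authentication and the HTTP call itself are left out.

```python
import json

PUBLISH_ENDPOINT = "https://indexing.googleapis.com/v3/urlNotifications:publish"
# Assumption: the default publish quota per project; check your own quota in Google Cloud.
DAILY_QUOTA = 200
# Hypothetical status labels treated as "already indexed", mirroring GSC wording.
INDEXED_STATES = {"Submitted and indexed", "Indexed, not submitted in sitemap"}


def plan_submissions(coverage, daily_quota=DAILY_QUOTA):
    """Split non-indexed URLs into today's batch and a backlog for later days.

    coverage: dict mapping URL -> index status string.
    """
    pending = sorted(url for url, state in coverage.items() if state not in INDEXED_STATES)
    return pending[:daily_quota], pending[daily_quota:]


def publish_body(url):
    """JSON body for a POST to PUBLISH_ENDPOINT (authentication not shown)."""
    return json.dumps({"url": url, "type": "URL_UPDATED"})
```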

As a result, we achieved almost 100% indexing. After we removed all duplicate language versions, as well as pages that did not perform and had no prospects, about 35,000 pages remained.

The index still shows slightly more pages than that, because some pages removed during the latest changes to the site have yet to drop out of it:

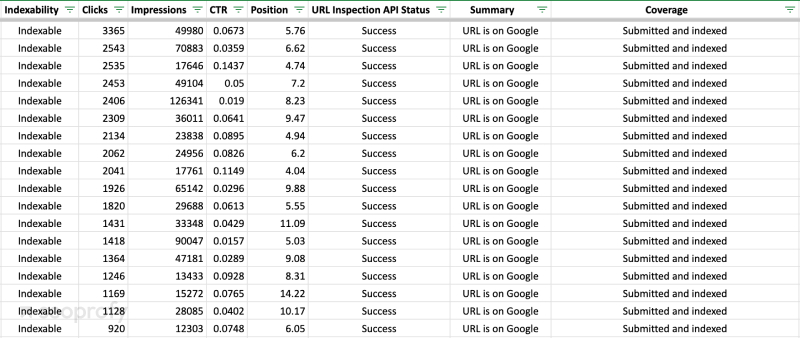

Content

First, we needed to evaluate the effectiveness of all existing content. To do this, we crawled the entire site with Screaming Frog connected to the Google Search Console API.

This gave us performance data and the indexing status for every individual page.

We then analyzed the pages that were indexed but received no clicks, checking whether each had relevant search queries:

- If it did, and the page showed potential, we prepared a technical and content brief to rewrite or optimize the existing content.

- If there were no such queries, and the page had neither traffic nor quality external links, we deleted the page and set a 410 status code.

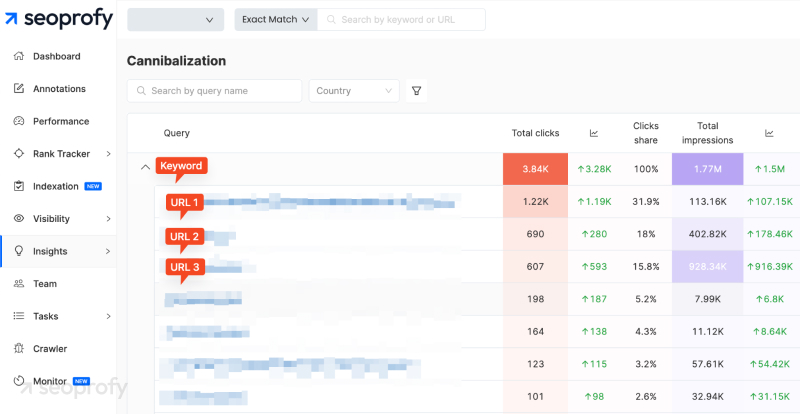
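The triage rules above can be written as a small function. This is a sketch under stated assumptions. The `Page` fields are hypothetical. The text's "showed potential" judgement is simplified to "has at least one relevant query". The `"review"` outcome, for pages without queries that still have quality backlinks, is a hypothetical catch-all for a case the rules leave open.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    indexed: bool
    clicks: int
    relevant_queries: int    # queries with search demand the page could target
    quality_backlinks: int


def triage(page: Page) -> str:
    """Classify an existing page according to the content-audit rules."""
    if not page.indexed or page.clicks > 0:
        return "keep"        # outside this pass: not indexed yet, or already earning clicks
    if page.relevant_queries > 0:
        return "brief"       # prepare a technical and content brief
    if page.quality_backlinks == 0:
        return "delete-410"  # no demand, no traffic, no quality links
    return "review"          # no queries but has links: decide manually
```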

At the same time, we analyzed keyword cannibalization, since the content appeared to have been written rather chaotically, without regard for basic SEO practices.

For this analysis, we used tools such as Seocrawl and SEOTesting:
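Cannibalization shows up in Search Console data as one query for which several pages collect impressions. This sketch assumes rows exported with both the query and page dimensions, as `(query, page, clicks, impressions)` tuples. The `min_impressions` threshold of 10 is a hypothetical noise filter.

```python
from collections import defaultdict


def find_cannibalization(rows, min_impressions=10):
    """Return {query: [pages sorted by impressions]} where 2+ pages compete.

    rows: iterable of (query, page, clicks, impressions) from GSC.
    """
    totals = defaultdict(lambda: defaultdict(int))
    for query, page, _clicks, impressions in rows:
        totals[query][page] += impressions  # sum across dates/devices if rows repeat

    result = {}
    for query, pages in totals.items():
        competing = [p for p, imp in pages.items() if imp >= min_impressions]
        if len(competing) > 1:
            result[query] = sorted(competing, key=pages.get, reverse=True)
    return result
```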

Seocrawl:

We also developed guidelines and recommendations for creating new content.

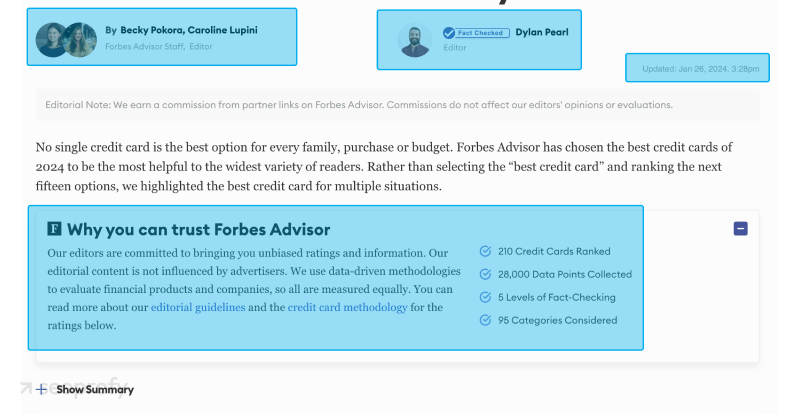

E-E-A-T

Note: Due to our NDA with this client, in this section, we're sharing examples from the websites of competitors in our niche.

Our niche analysis identified a set of elements that we classed as E-E-A-T factors. Most of them were present on 80% of the competitors in the top 10, so we decided to implement them on the client's website.

The elements we implemented include:

- the author of the article

- a short biography of the author along with links to their social networks

- a separate page for the author with a list of all their publications

- links to external resources for further reading

- structured data (schema markup) for the author

- the name of the editor/reviewer where applicable

- the date of the latest content update

- a "Why you can trust us" section

- a description of our assessment methodology

- as needed: editorial guidelines, advertiser disclosure, risk disclosure, etc.
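Author markup is usually added as JSON-LD. The sketch below builds schema.org `Article` markup with a `Person` author, the author's profile page, social profiles via `sameAs`, an optional `editor`, and publication and modification dates. All names and URLs in the usage line are hypothetical placeholders. Which properties to include is our assumption, not an exact copy of what was deployed.

```python
import json
from typing import Optional


def article_jsonld(headline: str, author_name: str, author_url: str, same_as: list,
                   editor_name: Optional[str] = None,
                   date_published: Optional[str] = None,
                   date_modified: Optional[str] = None) -> dict:
    """Build schema.org Article markup with a Person author (and optional editor)."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {
            "@type": "Person",
            "name": author_name,
            "url": author_url,   # the author's page listing their publications
            "sameAs": same_as,   # the author's social network profiles
        },
    }
    if editor_name:
        data["editor"] = {"@type": "Person", "name": editor_name}
    if date_published:
        data["datePublished"] = date_published
    if date_modified:
        data["dateModified"] = date_modified
    return data


def to_script_tag(data: dict) -> str:
    """Serialize for HTML; escape "</" so a value cannot close the script tag early."""
    payload = json.dumps(data, ensure_ascii=False).replace("</", "<\\/")
    return f'<script type="application/ld+json">{payload}</script>'


# Usage with hypothetical values:
markup = to_script_tag(article_jsonld(
    "How to choose a broker", "Jane Doe", "https://site.com/authors/jane-doe",
    ["https://www.linkedin.com/in/jane-doe"], editor_name="John Roe",
    date_modified="2023-05-10"))
```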

Here's an example from Forbes Advisor:

We also implemented the following:

- An "About Us" page

- A team introduction describing the team's expertise in this niche

- A contact page with all necessary details

- A statement that content is created by people, with a detailed description of how we conduct research and form ratings and recommendations.

Here are some examples from other websites:

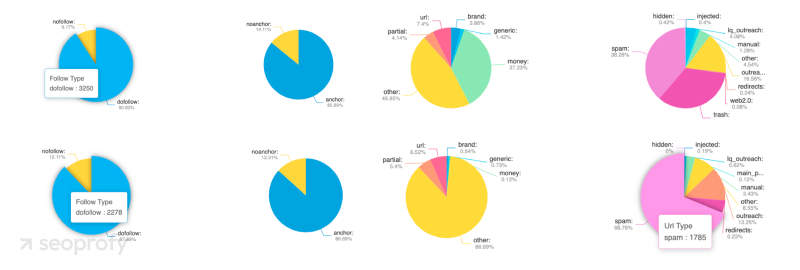

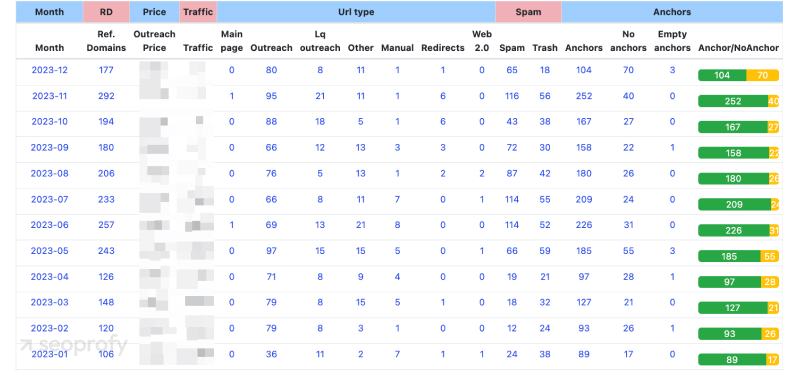

Link Building

We overhauled the entire link building strategy. Here are a few highlights:

- We began building links to pages in all language versions, not just EN.

- For the more exotic language versions, we replaced classic outreach posts with links from niche microsites.

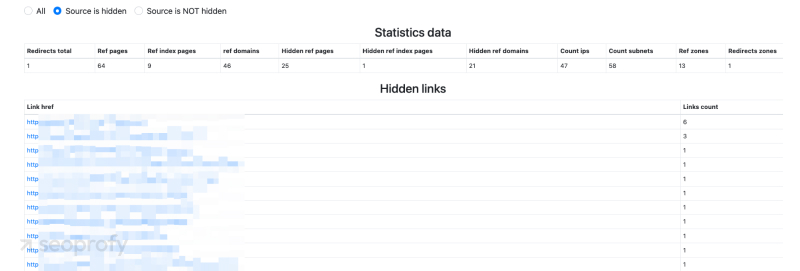

After a detailed analysis with SearchAnalytics (our in-house tool), we revised both the ratio of anchor texts to naked URLs and the anchor texts themselves, replacing the predominantly commercial anchors with a more varied mix.

The analysis also uncovered many spammy links that distorted the data. The updated strategy took all of these factors into account to balance safety and performance.

SearchAnalytics also surfaced quite a few hidden links that we would not have found through Ahrefs or similar services alone.

As the number of links grew, we added every new link to LinkChecker, our in-house service, to track it, monitor its indexing, and detect removals. LinkChecker also flags when someone adds new links to our posts through link insertions.
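SearchAnalytics is an in-house tool, so the sketch below does not reproduce it. It only illustrates how an anchor profile can be bucketed into naked URLs, branded, money, and other anchors to measure their shares. The `brand_terms` and `money_terms` lists and the bucket order are hypothetical choices.

```python
from collections import Counter
from urllib.parse import urlsplit


def classify_anchor(anchor, target_url, brand_terms, money_terms):
    """Put one anchor text into a coarse bucket (checked in this order)."""
    text = anchor.strip().lower()
    host = urlsplit(target_url).netloc.lower()
    bare_host = host[4:] if host.startswith("www.") else host
    if not text:
        return "empty"
    if text.startswith(("http://", "https://", "www.")) or text == bare_host:
        return "naked_url"
    if any(term in text for term in brand_terms):
        return "branded"
    if any(term in text for term in money_terms):
        return "money"
    return "other"


def anchor_distribution(links, brand_terms, money_terms):
    """Share of each bucket across (anchor_text, target_url) pairs."""
    counts = Counter(classify_anchor(a, u, brand_terms, money_terms) for a, u in links)
    total = sum(counts.values())
    return {k: round(v / total, 3) for k, v in counts.items()} if total else {}
```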

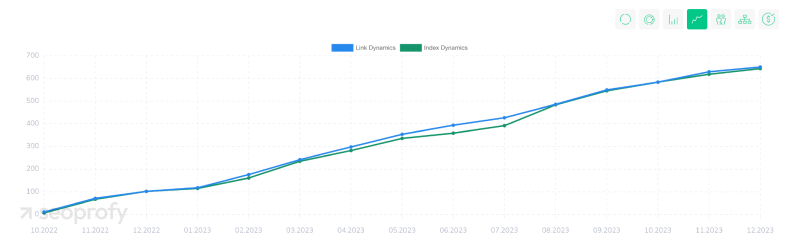
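LinkChecker is also in-house. The checks it performs can be illustrated on already-fetched HTML: whether a backlink is still present, whether it was switched to `nofollow`, `sponsored`, or `ugc`, and whether new outbound links appeared compared with a stored baseline (a link insertion). Fetching pages and checking whether the linking page is indexed are left out. The function names are hypothetical.

```python
from html.parser import HTMLParser


class LinkExtractor(HTMLParser):
    """Collect (href, rel tokens) for every <a> tag with an href."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            attr = dict(attrs)
            href = attr.get("href")
            if href:
                rel = set((attr.get("rel") or "").lower().split())
                self.links.append((href.strip(), rel))


def extract_links(html):
    parser = LinkExtractor()
    parser.feed(html)
    parser.close()
    return parser.links


def check_backlink(html, target_url):
    """Return "ok", "nofollow", or "missing" for a link to target_url."""
    for href, rel in extract_links(html):
        if href.rstrip("/") == target_url.rstrip("/"):
            return "nofollow" if rel & {"nofollow", "sponsored", "ugc"} else "ok"
    return "missing"


def inserted_links(html, baseline_hrefs):
    """Outbound links on the page that were not there when the post was published."""
    return sorted({href for href, _ in extract_links(html)} - set(baseline_hrefs))
```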

Here are the link building results over the last 12 months:

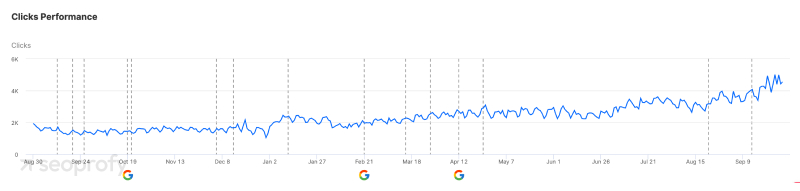

Results

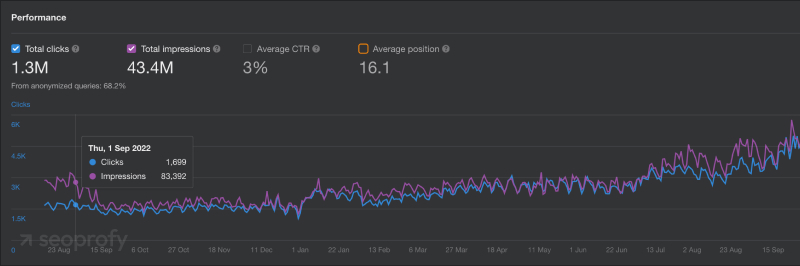

Our work with this client is ongoing. Over the last 12 months, we tripled organic traffic, and the client reports that FTDs (first-time deposits) have doubled.

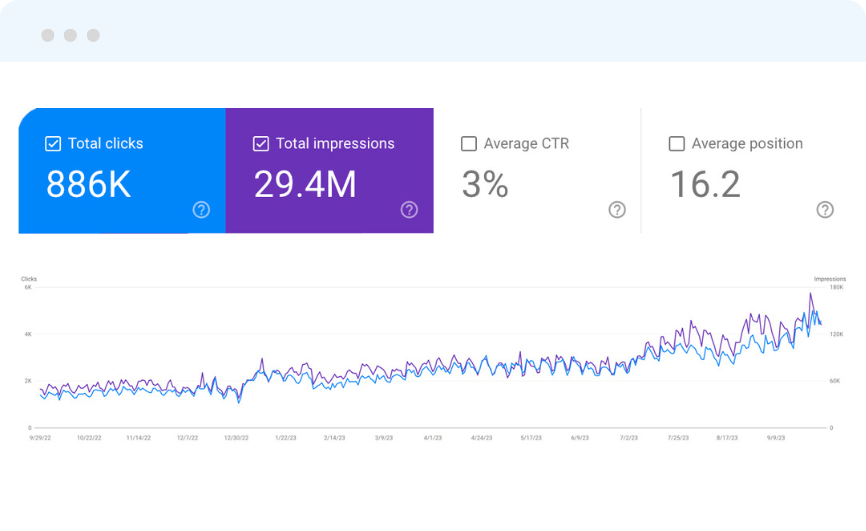

Google Search Console:

Ahrefs+GSC:

Seocrawl:

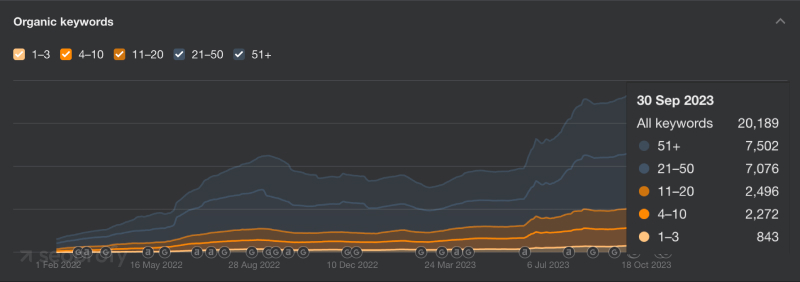

Organic keywords: